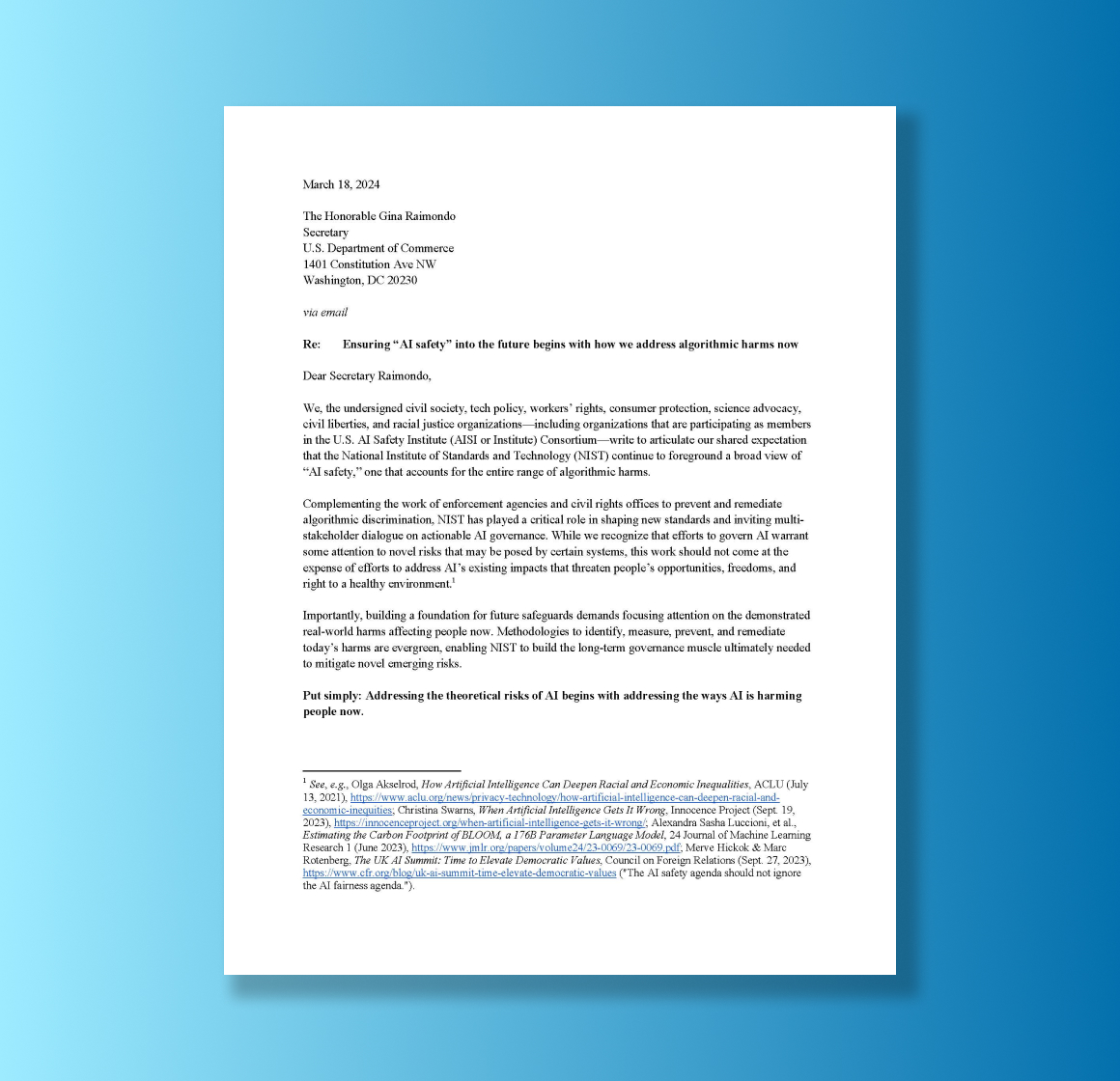

Some in industry and government have focused on AI's more speculative "existential" risks. In response, Data & Society joined groups including the Center for Democracy & Technology, American Civil Liberties Union, AFL-CIO Technology Institute, AI Now Institute, Fight for the Future, Mozilla, and Public Citizen in signing a letter to US Secretary of Commerce Gina Raimondo. The letter insists that addressing the theoretical risks of AI begins with addressing the ways AI is harming people now. It specifically concerns the National Institute of Standards and Technology (NIST) and its AI Safety Institute, which are tasked with implementing substantial portions of the recent AI executive order.

The letter underscores the signing organizations' shared expectation that the Institute will continue to foreground a broad view of "AI safety," one that accounts for the entire range of algorithmic harms, and will ensure that attention to emerging AI concerns does not overshadow the many well-known harms that warrant continued focus and investment.

In particular, the signatories emphasize that NIST's attention to more speculative AI harms should not compromise its track record of scientific integrity. They argue that the best way to approach the evolving set of risks posed by AI is to establish evidence-based methodologies to identify, measure, and mitigate harms.