By Jesin James

May 6, 2026

When we interact with AI-based speech technology like Siri, Alexa, or Google Assistant, we often make adjustments: we slow down, pronounce words carefully, switch to English, or suppress emotions so that we are better understood. But what if these systems were designed to understand not just our words, but our cultural ways of speaking, feeling, and being?

Emotion recognition is a growing branch of AI that interprets how people feel from cues in their voice, facial expressions, or behavior. Because most studies on emotions are based on dominant languages and cultures — most commonly, English — these systems are largely built on data from those same languages, and carry assumptions about how emotions are expressed in them. When people attempt to develop technology for less dominant languages, they tend to use English emotion terms as they are, or simply translate them to the other language. This leaves out a whole range of experience, and means Indigenous and under-represented communities are not just excluded from the development of AI, but at risk of being misrepresented by it.

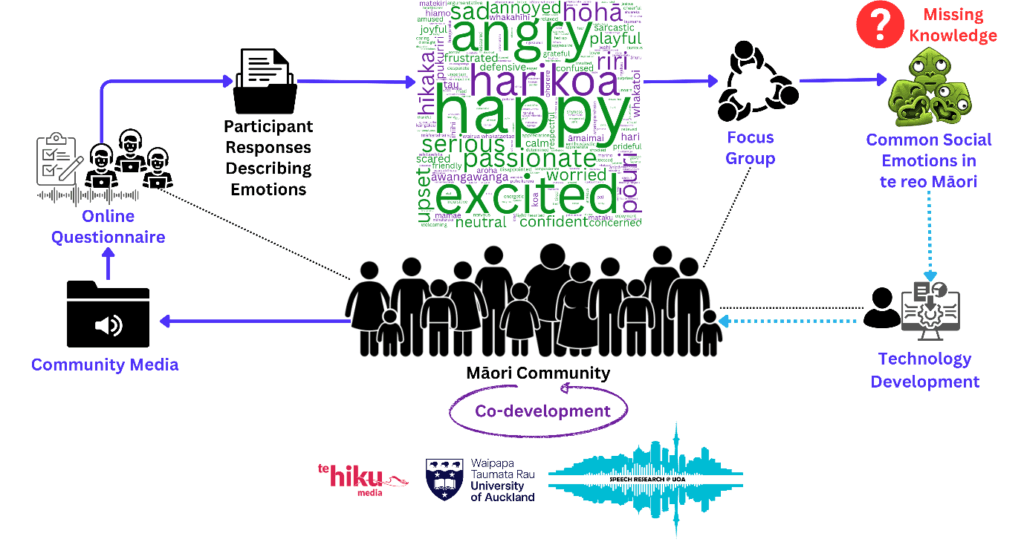

The Speech Research @ UoA Research Group at the University of Auckland and Te Hiku Media, New Zealand, are collaboratively taking a different path. Te reo Māori (the Māori language) is the indigenous language of Aotearoa New Zealand. In our aim to understand te reo Māori emotions and build technology for those emotions, we are working with Māori communities at all stages of this project. We started this process by consulting Māori speakers, elders, and experts (see image above), and that consultation continues at every stage of the project. This collaborative, culturally grounded, and community-led approach is not just about building better AI. It is about making sure that the benefits of technology development reach back to the community.

The Problem with Universal Emotion AI

Emotion recognition research is well-established in a few dominant languages, notably English, German, and Mandarin Chinese. Systems are typically trained using the six “basic” emotions: happiness, sadness, anger, fear, surprise, and disgust. Though these categories have long been assumed to be universal, that assumption has come under increasing scrutiny.

Several psychological and linguistic studies have challenged the idea that these categories correctly map onto every language or culture. For example, many cultures describe emotions in ways that reflect social relationships, spiritual beliefs, or communal values, rather than internal states alone. In te ao Māori (the Māori world), emotions are relational and often embodied, connected not just to individuals but to whakapapa (genealogy), whenua (land), and whānau (family). Literal translations of Māori emotional terms into English often fail to capture their full meaning. Terms like whakamā (shame) carry cultural nuances that are easily lost when forced into English categories. This raises critical questions: What does emotion recognition mean in a Māori context? How can we build systems that reflect Māori ways of feeling, rather than imposing imported frameworks?

Listening First: Co-Designing with Community

Our project did not begin with datasets or algorithms, but with conversations.

The conception of the project came during discussions with Te Hiku Media, a Māori media company and a world leader in indigenous technology development. They highlighted that little is known about how emotions are expressed in te reo Māori, which led us to collaboratively explore, for the first time, what emotions mean within an Indigenous language context.

We carried out an online questionnaire asking Māori speakers about the emotions they perceived in Māori media, provided as audio clips. Due to the prevalence of English in New Zealand, a consequence of colonization, all te reo Māori speakers also speak English. So, the participants of the questionnaire had the option to report the emotion terms in either language. This resulted in the 216 emotion terms, in both languages, shown in the word cloud above. This was followed by a series of focus group discussions with Māori speakers, aimed at gathering insights on how emotions are experienced, expressed, and understood in te reo Māori. These sessions were co-designed with Māori researchers and community members, ensuring that the questions we asked, the way we asked them, and the way we interpreted responses were culturally grounded.

Through this process, we identified 16 Māori emotion categories, which was the missing knowledge we were seeking at the beginning of this project. Some of these overlap with the so-called universal set, while others reflect unique cultural concepts or relational dynamics. For example, we identified harikoa (happy) and pōuri (sad), which align with universal emotion categories, whereas categories like pai (good, well), which lacks a direct English equivalent, and aroha (love), a culturally rich concept often absent from mainstream emotion research, highlight important cultural distinctions. This new set provides a culturally meaningful foundation not just for future emotion recognition models, but for teaching, language revitalization, and broader cultural understanding.

Importantly, the process was reciprocal. Participants shaped the research design, provided context for interpreting emotions, and guided the language used in our recordings. This was not just data collection; it was research grounded in relationship-building (whakawhanaungatanga).

Building a Māori Emotional Speech Corpus

With these 16 emotion categories in hand, we set out to create the first database of emotional speech in te reo Māori. We worked with a professional Māori-speaking voice actor to record 224 sentences that expressed each of the 16 emotional states. The recordings were carefully designed to sound natural and emotionally expressive, while also being phonetically balanced for future analysis. This corpus allows us to train and test models that can recognize emotional cues in te reo Māori speech.

We then tried to find patterns in how emotions sound when spoken in te reo Māori, an analysis that has never been done before for an Indigenous language. To do this, we looked at features of speech such as pitch, loudness, and voice quality, to see if different emotions can be told apart just by how they sound. We also used special tools to help us visualize how similar or different these emotions are from each other based on the way they are spoken.
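To give a feel for what features such as pitch and loudness look like in practice, here is a minimal sketch that is not taken from our actual analysis pipeline. It assumes a short frame of audio samples scaled between -1 and 1, uses a synthetic 200 Hz tone as a stand-in for a real recording, and relies on two hypothetical helper functions, `rms_db` and `estimate_pitch_hz`, written only for this illustration. Voice quality measures are more involved and are left out.

```python
import math


def rms_db(frame):
    """Loudness proxy: root-mean-square level of a frame, in dB relative to amplitude 1.0."""
    rms = math.sqrt(sum(x * x for x in frame) / len(frame))
    return 20 * math.log10(max(rms, 1e-12))  # floor avoids log10(0) on silence


def estimate_pitch_hz(frame, sample_rate, fmin=75.0, fmax=400.0):
    """Pitch proxy: the lag with the strongest autocorrelation inside a speech-like range."""
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        # Correlate the frame with a copy of itself shifted by `lag` samples.
        score = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag


# Synthetic stand-in for one frame of speech: a 200 Hz tone at half amplitude.
sample_rate = 16000
tone = [0.5 * math.sin(2 * math.pi * 200 * n / sample_rate) for n in range(1024)]

features = {
    "pitch_hz": estimate_pitch_hz(tone, sample_rate),
    "loudness_db": rms_db(tone),
}
print(features)
```

In a real study, values like these would be computed over many frames of each recording and summarized per emotion category before any comparison is made. Simple autocorrelation is used here only because it is easy to read; dedicated speech analysis tools are more robust on natural speech.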

The preliminary results are promising: many of the Māori emotion categories show distinct acoustic profiles, indicating that these emotions are not only culturally meaningful but also detectable in speech signals. Some Māori emotions also behave similarly to English emotions acoustically, a finding that needs further exploration.

Why This Matters: Equity, Representation, and AI Justice

Speech technology is becoming deeply embedded in our lives, from voice assistants and customer service bots to language learning apps and healthcare tools. But if these systems are trained only on dominant languages and cultural assumptions, they risk reinforcing existing inequalities.

Indigenous communities around the world have long been excluded from the design of new technologies. Yet these same communities often hold rich knowledge about communication, emotion, and language that could enrich AI. Our project is a step towards a different kind of AI, one that begins with community, respects cultural knowledge, and recognizes that language is more than just data. It is about identity, belonging, and the opportunity to be understood on your own terms.

A Collaborative Effort

This work is the result of collaboration across disciplines and worldviews. It is being led by Dr. Jesin James, alongside Dr. Ake Nicholas (Ngāti Te’akatauira, Ngā Pū Toru), Dr. Gianna Leoni (Ngāti Kura, Ngāi Takoto, Te Aupōuri), Professor Catherine Watson, and Associate Professor Peter Keegan (Waikato-Maniapoto, Ngāti Porou). Dr. Leoni is from Te Hiku Media, and all other researchers are from the University of Auckland. Himashi Rathnayake, a PhD candidate at the University of Auckland, has worked to make this project a reality by incorporating feedback from all members of the team. We come from different backgrounds, including engineering, linguistics, Māori studies, Māori data science, education, and social work.

We are also grateful for the support and partnership of Te Hiku Media (Te Reo Irirangi o Te Hiku o te Ika) and New Zealand’s Science for Technological Innovation (SfTI) National Science Challenge, which provide not only infrastructure but community links and a shared vision for Māori-led digital futures.

Looking Ahead

This is just the beginning. The emotional speech corpus we have created will be expanded and refined with more voices and community input. We aim to develop machine learning models that can recognize Māori emotions to support culturally informed applications in education, health, and digital media. We hope that this work will inspire similar efforts in other Indigenous and under-resourced languages. By democratizing AI, we not only make better technology but also build more inclusive and more human systems. We believe this begins with a simple idea: that every voice, and every emotion, deserves to be heard.

Dr Jesin James is a senior lecturer at the Faculty of Engineering and Design, University of Auckland, working where language meets technology. Her research spans speech signal processing, machine learning, and engineering education, with a strong focus on reducing bias in speech technologies. She works with low‑resourced Indian and Pacific languages, including Malayalam, te reo Māori, Cook Islands Māori, and New Zealand English, to build more inclusive and human‑centred systems. As an early career researcher, Jesin has been actively involved in national and international projects in this space, alongside regular invited talks, media, and funded research initiatives. She is also passionate about bringing language communities and researchers together, and has co‑organized Māori and Pacific languages speech technology gatherings to share knowledge, spark collaboration, and center community voices. Guided by the philosophy of ako, Jesin is passionate about creating technology and classrooms that learn from people, not just data.