Chatbots are reshaping not only how people seek help, but how they define it, researcher Briana Vecchione writes.

August 6, 2025

In the past few years, we’ve seen a quiet shift unfold: more and more people are turning to chatbots not just for entertainment or productivity, but for emotional support. Whether it’s ChatGPT, Replika, or another AI companion, users are increasingly engaging these tools in ways that look and feel a lot like therapy. They ask for advice. They share secrets. They seek comfort. Some even describe their chatbot as “better than my therapist.”

This shift raises urgent questions, especially for those of us who understand the limitations of these technologies. AI chatbots mirror users back to themselves in unpredictable ways, and their fluency can obscure the fact that they don’t actually understand, listen, or care, and that they sometimes even “hallucinate” false information. Yet people still turn to them, not just once but regularly. And not just for information, but for help.

Our research team wanted to know why. We set out to explore what people actually do with mental health chatbots and how those interactions shape users’ emotional lives, relationships, and sense of self. We weren’t only interested in evaluating chatbot performance. We wanted to understand how people interpret the “support” they receive from these tools, and what those interpretations reveal about their needs, desires, and constraints.

This project began as a collaboration between me, a computer and information scientist, and Livia Garofalo, a medical anthropologist focused on therapy and the platform economy. Since then, it has grown into a broader interdisciplinary effort with our colleagues Ranjit Singh, who brings expertise in computer science, engineering, and science and technology studies, and Emnet Tafesse, who brings expertise in political science and public policy.

We started with a fairly straightforward question: When and why do people use chatbots for emotional support?

For some, the answer was crisis: a panic attack at 2:00 a.m., or a surge of spiraling thoughts when no friends were awake to call on. For others, it was boredom, loneliness, or the slow seep of daily stress. People often use bots as a supplement to traditional therapy, but some users also turn to them as a substitute when they cannot afford therapy, access it, or trust it. Still others described their chatbot use not as therapy at all, but as a kind of mindful journaling or emotional processing.

What these different patterns suggest is that people don’t treat these tools as static “products.” Instead, they integrate them into existing care routines in flexible, sometimes surprising ways. Understanding those routines helps us see chatbot use as less of a replacement for care and more as a window into what people are looking for in particularly challenging moments of their lives.

Next, we examined how people perceive the type of support chatbots provide. Do they see it as genuine care, a stopgap, or something else entirely?

It turns out it depends on how they position the chatbot in their own lives.

Some users recognized the chatbot as “just a tool,” but still described it as meaningful. Others attributed a surprising amount of emotional weight to their interactions. They talked about “feeling heard,” “seen,” or “understood” after chatting. What mattered to these users wasn’t whether they perceived the bot as “real,” but the ways they drew on it to make sense of their own inner lives. Even when users knew the bot wasn’t conscious, they still assigned it roles like coach, sounding board, guide, and even friend. These roles shaped how they interpreted its words, and in turn, how they interpreted themselves.

Perhaps the most intimate theme that emerged was shame.

Many participants turned to chatbots to share things they didn’t feel comfortable sharing with other people, sometimes even with a therapist. One person said they didn’t want to “burden” their loved ones with their feelings; another didn’t want to be “the friend who’s always complaining.” Although chatbots are interactive agents, participants often described them less as social others and more as spaces — a kind of emotional container where they felt “no shame” and didn’t have to worry about “judgment.” The bot became not just someone to talk to, but a neutral place to unload without fear of reaction.

This was especially true for users processing stigmatized emotions, trauma, or identity issues. The chatbot served not just as a neutral listener but as a shield: a place where emotional expression could happen without consequences, and vulnerability felt private and contained.

Several users described chatbot use as more manageable than therapy. One participant compared interacting with a bot to “shining a flashlight” on their emotions, in contrast to therapy, which felt like turning on “all the lights.” In this way, bots offer a kind of “auto-intimacy,” or a way to engage in self-understanding without the exposure that human connection sometimes demands.

Of course, these interactions don’t happen in a vacuum. Chatbots come with real ethical and emotional risks. So we also wanted to know: How much do users know about these systems, and how much do they care?

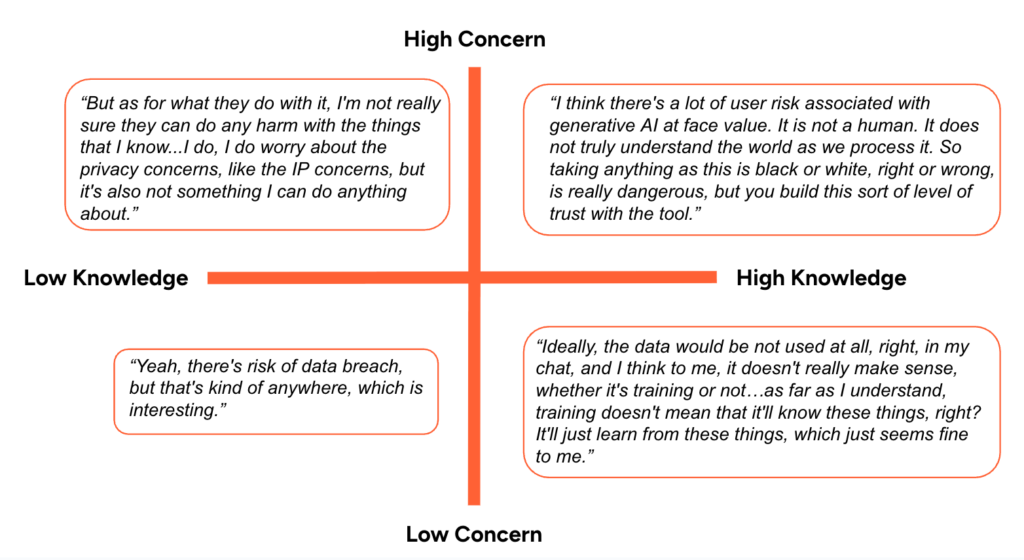

Here, we saw people moving between different intersections of knowing and caring. Their responses formed a grid; knowledge and concern moved on separate axes that intersect in surprising ways.

Figure 1: Axis of Knowing vs. Caring — How much do users understand how the system works, vs. how much do they care about ethical/privacy issues?

Some users knew very little about how LLMs work or how their data might be used, but they were concerned about privacy and safety. Others were well-informed but relatively unconcerned about those issues, accepting the tradeoff as the price of access. Still others expressed a kind of resigned ambivalence: they did care, but felt powerless. “There’s nothing I can do about it,” one person said. “Yeah, there’s risk,” said another, “but that’s everywhere.”

This gap between knowing and caring was striking. It wasn’t enough for people to be informed about the risks; what mattered was how that knowledge translated into feelings, values, and behaviors. Some used the bot cautiously, while others used it heavily despite their concerns. Many fell somewhere in between, negotiating emotional needs against ethical discomfort in real time.

If there’s one thing our early findings make clear, it’s that chatbot use is not just about the bots. It’s about people: how they navigate their emotional worlds, how they make sense of themselves, and how they seek support in a system that often fails them.

Some use chatbots because they’re free or fast. Some because they’re always available. Some because they don’t talk back. However, almost all the people we interviewed are using these tools to perform emotional work — to feel something, understand something, or cope with something.

These interactions raise questions not just about chatbot design or regulation, but about the nature of care itself. What does it mean when a machine feels safer than a friend? What does it say about our systems when users turn to a language model to feel less alone? And what happens when that model changes, breaks, or disappears?

Our study is still ongoing. We’ve just completed our first round of interviews, and we’re launching a diary study and focus groups this summer. But already, it’s clear that chatbots are reshaping not only how people seek help, but how they define it.

We’re grateful to our participants for letting us into their private world of interactions with chatbots, and we look forward to learning more. If you’re doing related work or are just curious, we’d love to hear from you at [email protected]. For us, this is just the beginning of a much larger conversation.